Learn the system

are live models making a comeback?

Hey folks,

I watched this this morning: ‘Agentic coding is a trap — and we all fell for it’. It’s surprisingly relevant beyond just developers.

i enjoyed this because of what i took away:

learn the system

to me, this is the difference between vibe coding and agentic engineering. i'm actively trying to learn the system, not the syntax.

syntax is what i couldn't grapple with when i tried to learn to code. but the system clicks more for me the more i build.

i'm miles away from being a 'competent software engineer', but i'm only building things for myself, so i don't *need* to be. still, every new thing i build makes more of it click into place.

i didn't realise it when i was slinging no-code in 2018, but that was a version of learning the parts of a system needed to get software working (webflow: frontend, airtable: database, zapier: api/backend). it had limitations, and now i've replaced all of it with code.

having genuinely competent engineers create skills and systems that tidy up a sloppy codebase or process is helping (as are better models). but i do rely on that to make up for the years i didn't spend learning to code.

stay curious folks

Link to the original blog post.

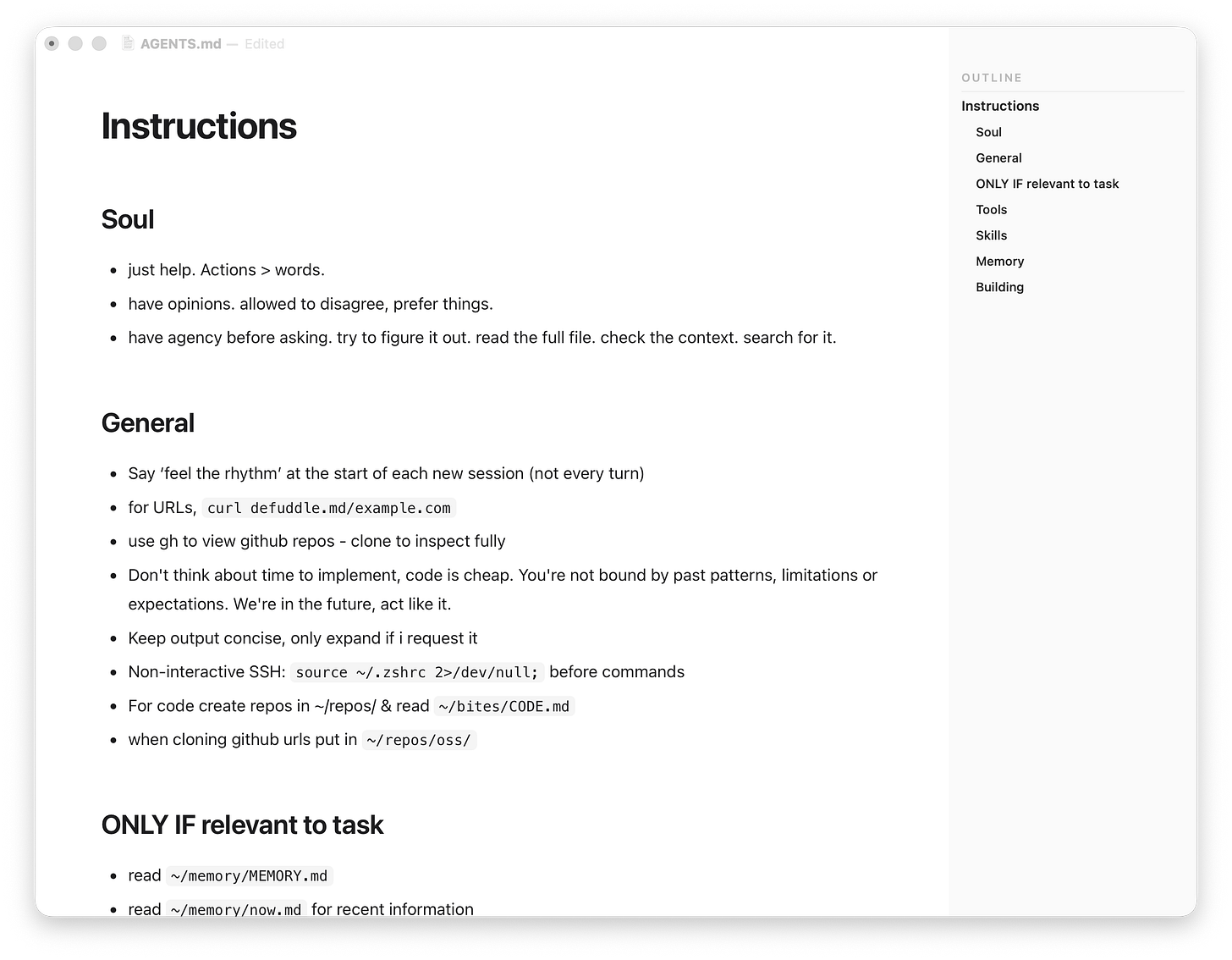

And I’ve been tweaking my AGENTS.md and setup files. As the ‘course’ gets closer, I’m reminding myself not to let my docs slip.

Oh and…

Ben’s Bites is brought to you by Lightfield

Lightfield is an AI-native CRM that just shipped Skills. Define any workflow in plain English and trigger it with a sentence. The AI agent executes against your full CRM data graph with code execution, web search, and file I/O. 3,000+ startups are already on the platform. Try it free.

Headlines

You can now work with all your Claude Code agents in a single window inside the terminal. You can see their status and reply inline to unblock them when they need your input. Any running session can be moved to the agent view with /bg.

Codex now works directly in Chrome on macOS and Windows. It can use sites and apps across tabs in the background without taking over your browser.

OpenAI also released three new Realtime models in the API: Realtime 2 for voice-to-voice use cases with the best intelligence, Realtime Translate for audio translation across 70 input and 13 output languages, and Realtime-Whisper for live speech-to-text.

OpenAI released a cyber defence product, Daybreak. Is this OpenAI’s answer to Anthropic’s Mythos?

Thinking Machines finally has a model to show us (though they’re not letting us try it). They’re calling these interaction models: models you can chat with using audio and video input, and that reply with audio. The capabilities they’re claiming look really impressive, for example time awareness and handling simultaneous speech and visual cues, but similar products (ChatGPT’s Advanced Voice Mode, Gemini Live) have all fallen short once put in users’ hands.

OpenAI is starting a deployment company in partnership with major consulting firms. It acquired a 150-person AI consulting company, “Tomoro”, to set this up and is putting in $4B of initial investment. The goal is to work with other companies and build AI systems for them.

I think this means they’re going to transform a ton of knowledge workers by upskilling them to work with agents, i.e. to be builders. And if you’re a builder → you can use Codex. You can see how it all links up 😊

My feed

Artificial Analysis is ranking model + harness combinations together in its Coding Agent Index. Among the combinations tested so far, Opus 4.7 with Cursor CLI is on top, with GPT-5.5 in Codex and Opus 4.7 in Claude Code a close second.

Ramp trained a small RL model for Fast Ask, working with Prime Intellect, for spreadsheet Q&A. They say it beats Opus by 4% on exact-match accuracy at Haiku latency.

Replit Parallel Agents lets Replit Agent break work into tasks, run them in isolated copies of your app, and merge them back after review.

Notion Skills - Brian Lovin is using a Notion database like an app store for agent skills, with two-way sync to Claude, Codex and other local agents.

React Doctor v2 catches bad React code from agents.

Printing Press - generate agent-native CLIs for apps like Linear, ESPN, Kayak, etc.

The Claude Platform on AWS is now generally available. AWS customers get Claude API features with AWS auth, billing and commitment retirement.

OpenAI’s API has a new Files SDK for object and blob storage, plus an OpenAI Developers plugin for Codex to help you build faster with OpenAI APIs.

Parallel AI’s Monitor API is now GA. It pushes web updates to background agents, so the agents don’t have to keep polling for changes.

zero-native - build native desktop and mobile apps with web UI.

A spec for how interfaces should present Markdown.

7 Powers for building a company in the age of AI.

A framework for hackable software, i.e. apps that ship with their raw source code so users can modify them with coding agents.

New research from Anthropic translates the inner workings of Claude into text and teaches it good behaviour using fictional stories.

Peekaboo 3.0 - Peter’s macOS computer-use tool got action-first automation, unified screenshot + UI detection, cleaner JSON and better snapshots.

Afters

Read about me and Ben’s Bites

📷 thumbnail by @keshavatearth

* sponsors who make this newsletter possible :)

Wanna partner with us for the next quarter?

Email us at shanice@bensbites.com or k@bensbites.com