ChatGPT's Nano Banana

testing popular design tools

Hey folks, Keshav here.

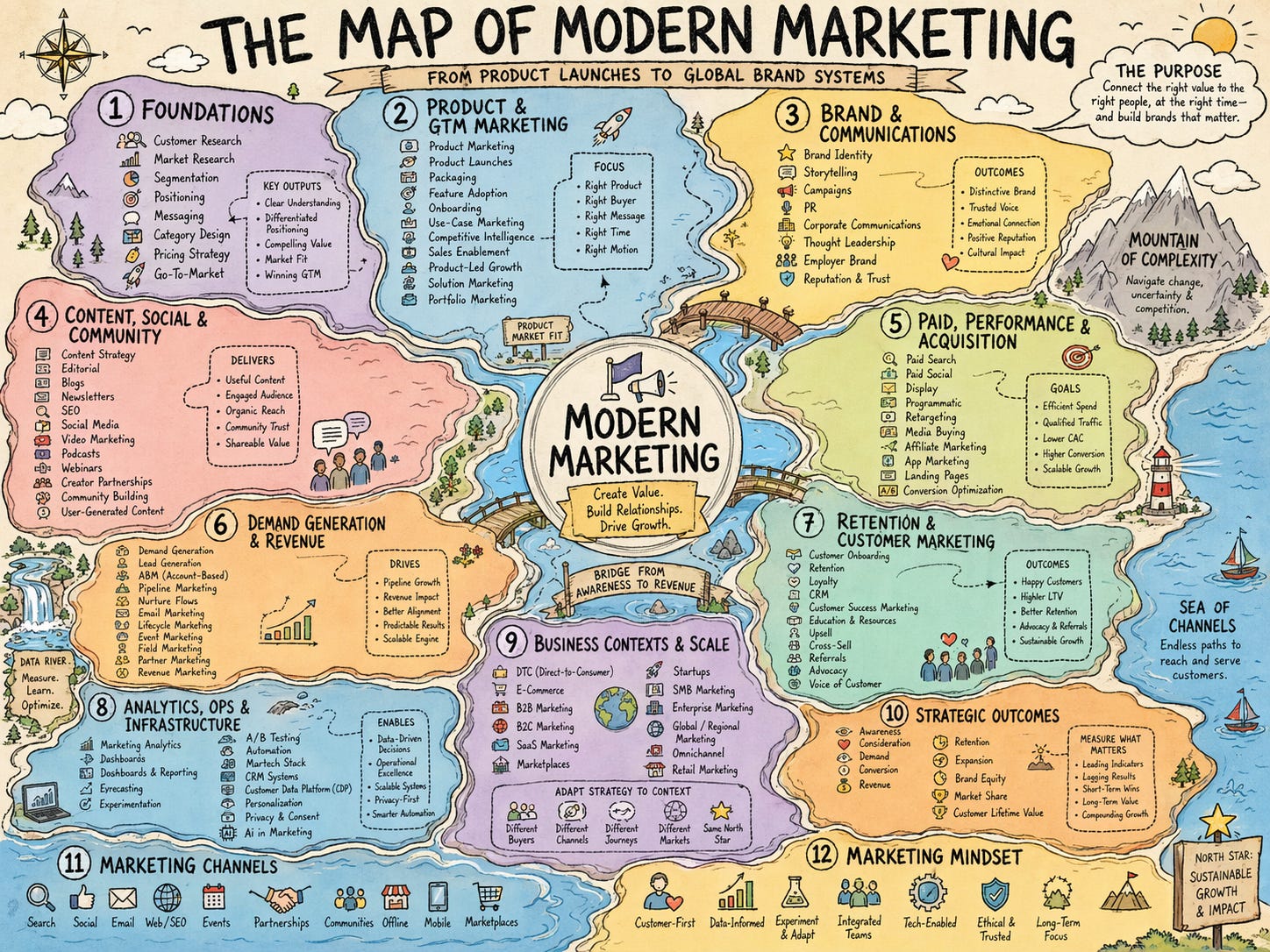

For a few months, it felt like Google had won the image generation space. But OpenAI is back in the game. ChatGPT Images 2.0 is miles ahead of anything else. It’s beyond impressive at text: I haven’t seen a single generation with typos, even with hundreds of words per image. See this example I created:

It’s also really good at creating realistic pictures, like this one of Professor Ben.

Oh, sorry, that one’s real. Ben was at Stanford this Wednesday, teaching how to build with AI agents.

Image generation is also available in the Codex app as a skill. Use it with thinking models for the best results: the model can think, make code/tool calls (like creating a QR code from a link or searching the web for logos), and then use the results as reference images. It can also create images, reflect on them, and improve the generation.

People are creating realistic UI screenshots, multi-page illustrated magazines, personal style recommendations and creative QR codes using the new model.

The “generate UI as image” bit is interesting. Maybe there’s finally a solution to GPT-5.4’s lack of design taste. The latest coding models are fairly good at turning screenshots into code, but there are still gaps.

Last weekend, I tested a bunch of tools/models on implementing a design (for an ads storefront for Ben’s Bites) from a screenshot. I found:

Claude Design > Magicpath AI > Raw models (like Gemini 3.1 Pro/Opus 4.6 in their web apps), when it comes to understanding the concept and making something usable, not just copying the pixel-by-pixel look (ironically, Gemini won that).

When asked to turn designs into a real working app, there was a major drift in how the apps looked. Opus 4.7 did better than GPT-5.4 at visually matching the reference screenshot. GPT-5.4’s code was more functional, though, and its unseen pages (like the admin panel) had a design consistent with the rest of the app.

Also, in many cases, the assets (hero image, icons, background textures) are what make the UI in a “generated image” stand out. When replicating that UI from a screenshot, you get a barebones UI with the correct buttons and layout but none of those assets, so the output falls short of expectations.

Ben’s Bites is brought to you by TinyFish

Funny how AI agents can write entire apps but can’t work on the live web. Playwright scripts break, raw fetches eat your context, bot detection blocks you, nothing’s scalable. TinyFish gives search, fetch, stealth browser, web agent, all managed in one API. Try it free. Comes with a CLI + Skill.

Headlines

OpenAI has a new product for Business, Enterprise and Edu users - Workspace Agents. These are Codex-powered agents inside ChatGPT, each with a persona, a task and access to external tools (like Linear), and they’re accessible from Slack as well. These agents will also replace custom GPTs down the line (finally). Read more.

Gemini Deep Research API now offers two configurations based on 3.1 Pro. It claims the best performance in web research and finding hard facts. Plus, it gets MCP support and can create charts using Nano Banana or HTML.

Cursor and SpaceX are working together - Cursor will train coding models on SpaceX’s GPUs and likely share them with xAI. SpaceX can, in turn, acquire Cursor later this year for $60B, or pay $10B for the partnership if it doesn’t. On a similar note, Thinking Machines also just signed a multi-billion-dollar Google Cloud deal.

Give your Droid a computer - You can now give your Droid an always-on machine with its own filesystem, credentials, and config for it to keep working on your tasks. This can be in the cloud (managed by Factory), or you can bring your own device.

My feed

Chronicle - Cursor for slides. Never build a deck from scratch again. Turn ideas into stunning presentations in minutes.*

ChatGPT for Excel and Google Sheets are now in beta - build new sheets, fix formulas, explain models, and update workbooks in place. (read more)

/ultrareview in Claude Code (research preview) lets you run bug-hunting agents in the cloud before merging riskier changes like auth, data migrations, or other critical code paths.

OpenAI built an open-source viewer for chat data and Codex session logs - Euphony.

Sierra is piloting an AI-native interview - debugging/review focused interviews where candidates improve a medium-sized codebase with coding agents.

ml-intern from Hugging Face - an open-source research agent that comes up with experiments and runs them.

Clawputer - Managed OpenClaw agent inside an always-on sandbox.

Kami - design skill for AI-native docs, resumes, portfolios, long docs, and slides.

noscroll - an AI that doomscrolls X for you and texts you just the signal. In my experience, this is easy to claim and hard to get right.

Monologue has a new Notes feature for thinking out loud when you don’t know the exact words you want to dictate.

Fin is moving beyond customer support into sales - using the same business context and integrations to qualify leads and book meetings.

Perplexity post-trained a Qwen-based model to handle search and tool calls for cheaper, and it’s already serving a meaningful chunk of traffic.

The next Slack won’t look like Slack, and Ando looks like one early attempt at that.

Frontend in 2026 - for and against the frameworks and abstractions dominant today.

Afters

Read about me and Ben’s Bites

📷 thumbnail by @keshavatearth

* sponsors who make this newsletter possible :)

Wanna partner with us for the next quarter?

Email us at shanice@bensbites.com or k@bensbites.com
